Education

source: Google

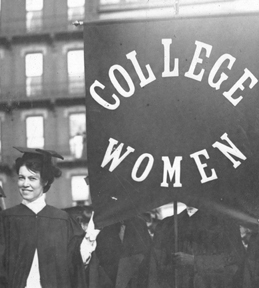

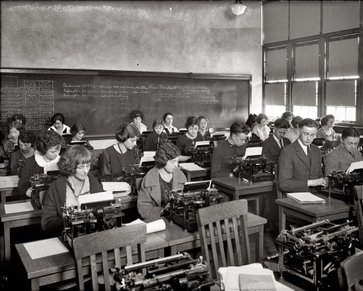

Before the 1920s, most women received little formal education. They were expected to devote themselves to caring for children and the home. During World War I, women took on men’s jobs while the men were at war, but once the war was over they were pushed back into the home. They had no voice in society, and men spoke for them.

Education changed this drastically. Women began attending college, a major success that led to better job opportunities. Education became an essential tool for most women: it opened doors to well-paid work, and money meant power. With this new financial power, teenagers were able to move out of their parents’ homes and enjoy more freedom. Teaching, nursing, and social work became areas of expertise for most women.

Education also became a way for women to value themselves. It gave them the financial independence to do what they wanted, such as paying their own bills, taking vacations, and buying their own clothes, and it gave them the confidence to live as they pleased. Education also produced many famous poets, writers, and artists, since attending college gave women an opportunity to improve in these areas.